EEF toolkit ‘more akin to pig farming than science’

Academics have dismissed a tool that aims to help schools use research evidence to spend the money earmarked for disadvantaged pupils as “extremely misleading and utterly unhelpful”.

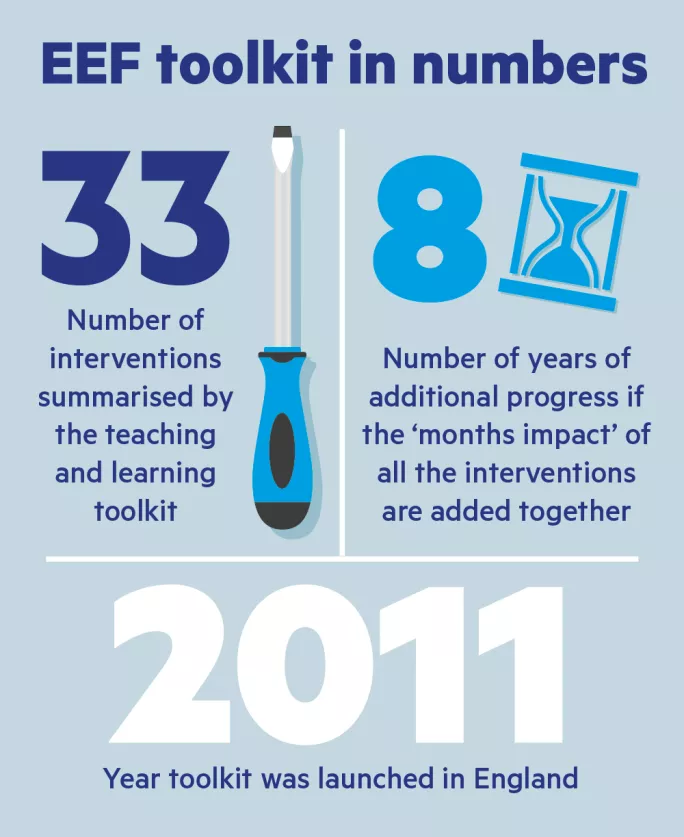

The Education Endowment Foundation’s (EEF) teaching and learning toolkit takes more than 10,000 studies and distils them into 33 interventions - from summer schools and homework to school uniforms and reduced class sizes.

For each intervention, it presents schools with the number of months of additional progress that pupils might make in an academic year, the cost and the strength of the evidence.

The tool was launched in England in 2011 and a Scottish version went live last month to lay the groundwork for the introduction of the pupil equity fund - the Scottish equivalent of the pupil premium.

However, academics have attacked the toolkit for applying the kind of input-output logic “that works in pig farming” to “the complex endeavour of education”. They also criticised it for reinforcing, through its focus on attainment, the idea that education is only about qualifications.

The academics - Terry Wrigley, a visiting professor at Northumbria University, and Gert Biesta, a professor of education and director of research in Brunel University London’s department of education - also accused the toolkit of “bundling together” very different studies, carried out in a range of contexts, to come up with the average number of months of progress gained, when some of the studies contradict the headline statistic.

Before the toolkit, the chasm between research and classroom practice was huge

The danger is that schools could dismiss some interventions, such as lowering class sizes, based on the toolkit’s rating, according to Professor Wrigley.

While reducing class sizes is rated in the toolkit as “moderate impact for high cost”, it has also been shown to be beneficial for more disadvantaged pupils.

Ultimately, Professor Wrigley said, if you added the months of progress attributed to the 30-plus categories in the toolkit together, you would get a total of more than eight years. “It would seem quite a ludicrous claim that, if you were to follow all these things, suddenly you would have five-year-olds behaving like 13-year-olds,” he said.

Making sense of research

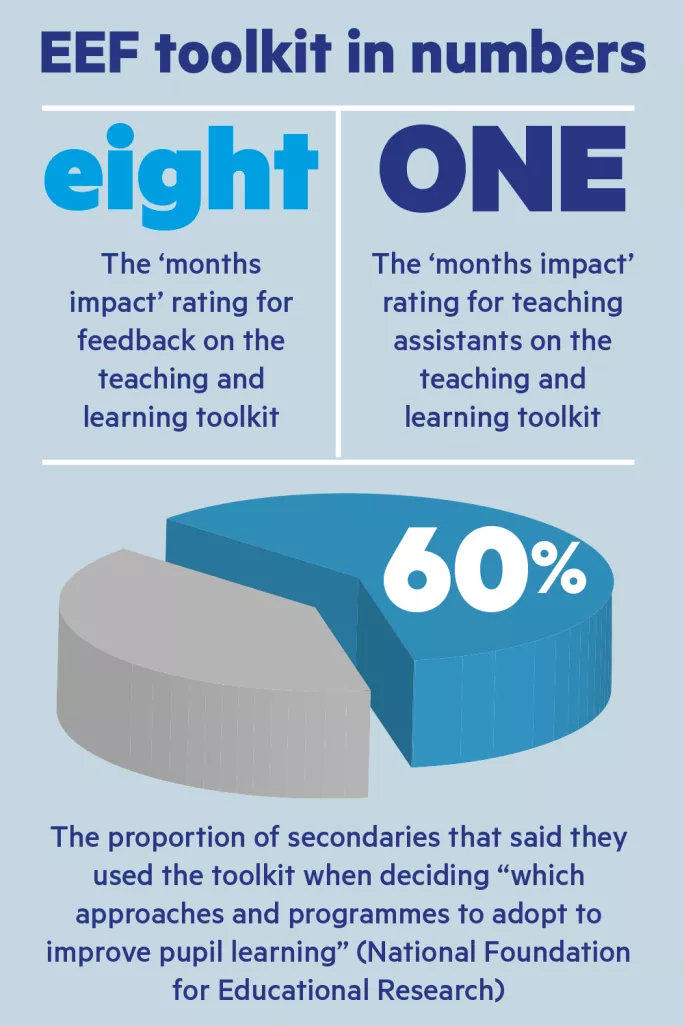

Some of the criticisms are echoed by Peter Blatchford, professor of psychology and education at the UCL Institute of Education (IoE), whose research into teaching assistants influenced their low rating in the toolkit. However, Professor Blatchford ultimately backs the toolkit for at least attempting to help schools “make sense of the evidence”.

His colleague at the IoE, David Gough, professor of evidence-informed policy and practice, and an expert in research synthesis and use, also said that the toolkit could be “misused”, but stressed that without the toolkit “we might just assume that certain approaches to education are effective”.

For its part, the EEF accepts much of the criticism levelled at it: that the toolkit focuses on academic attainment; that it considers the impact of interventions on all children, not only those suffering from poverty-related disadvantage; and that summarising a large body of evidence in a manageable amount of information comes with risks.

However, the risks of meta-analysis - of which the toolkit is one example - are outweighed “by the huge value of presenting such a large amount of evidence to schools and teachers in an accessible and practical way”, the EEF argues.

Danielle Mason, head of research at the EEF, said: “Before the toolkit was launched in 2011, the chasm between educational research and classroom practice was huge. Our aim is to help bridge that gap.”

However, Professor Wrigley argues that because the toolkit appears scientific, teachers are in danger of taking it at face value without appreciating the risks.

He told TES: “It looks like science, and when you present things in this way, busy people will think ‘we have got something here we can rely on’ without reading further and, in fact, it’s hard to read further because of copyright and licensing.”

Professor Biesta added: “The toolkit is extremely misleading and utterly unhelpful. Some major issues are the depiction of education as some kind of causal input-output system, with the idea of teaching as an intervention that is supposedly making some impact on the student - an impact that can then be measured through test scores. This may be a logic that works with pig farming, but not with the complex endeavour of education.”
